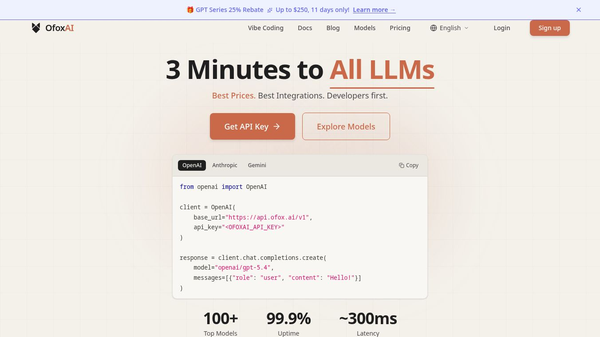

TUEN is a platform for running artificial intelligence models with low latency. It is built on dedicated hardware and is designed to handle many concurrent requests while keeping response times low. Developers and businesses can get results from AI models without waiting for servers to warm up or sitting in long queues.

Benefits

The main advantage of TUEN is speed: the platform is described as handling requests in a few milliseconds. Models are kept ready to serve, so requests are not meant to incur a cold start delay. It supports a wide range of AI workloads, including image creation, text generation, speech synthesis, and video production. Because it runs custom software on dedicated hardware, it avoids the overhead that often comes with virtual machines. Users get high uptime and can scale across multiple regions.
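Latency claims like these are easiest to judge by measuring them. The following is a minimal sketch of a timing harness, not TUEN's API: `fake_inference` is a hypothetical stand-in that only sleeps for about 2 ms, and it would need to be replaced with a real client call. The helper names `measure_latency_ms` and `summarize` are likewise assumptions made for illustration.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def measure_latency_ms(call: Callable[[], object], runs: int = 20) -> List[float]:
    """Time `call` repeatedly and return each duration in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples: List[float]) -> Dict[str, float]:
    """Report median, nearest-rank 95th percentile, and worst-case latency."""
    ordered = sorted(samples)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "p50_ms": statistics.median(ordered),
        "p95_ms": ordered[p95_index],
        "max_ms": ordered[-1],
    }


def fake_inference() -> str:
    """Hypothetical placeholder for a real inference request."""
    time.sleep(0.002)  # simulate roughly 2 ms of work
    return "ok"


if __name__ == "__main__":
    print(summarize(measure_latency_ms(fake_inference)))
```

Comparing the first sample against the rest is a simple way to spot a cold start: if the first request is much slower than the median, the service was not warm.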

Use Cases

Developers can use TUEN to build applications that need real-time AI responses. For example, a website might use the platform to generate images as soon as a user submits a prompt. Another use case is chatbots that reply to customers within seconds. Businesses can also use it for audio transcription or for converting text to speech in accessibility tools. The platform suits anyone who needs to deploy AI models quickly and reliably without managing complex infrastructure.

Pricing

Users can start without a credit card. No pricing plans are listed in the available information, but the service is designed to be accessible for immediate testing and deployment.

Vibes

The community surrounding TUEN is active and focused on building scalable AI solutions. Many developers are currently using the platform to ship production-grade applications. Users appreciate the ease of use and the lack of friction in getting started. The real-time code playground feature is well received by the developer community as it allows for instant feedback and testing.

Additional Information

The platform is maintained by a team of active contributors shipping inference at scale. It runs on a mix of high-performance GPUs and is available in five global regions to reduce latency for users worldwide. The latest version of the inference engine is reported to improve both speed and reliability.

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.
