PromptBrake

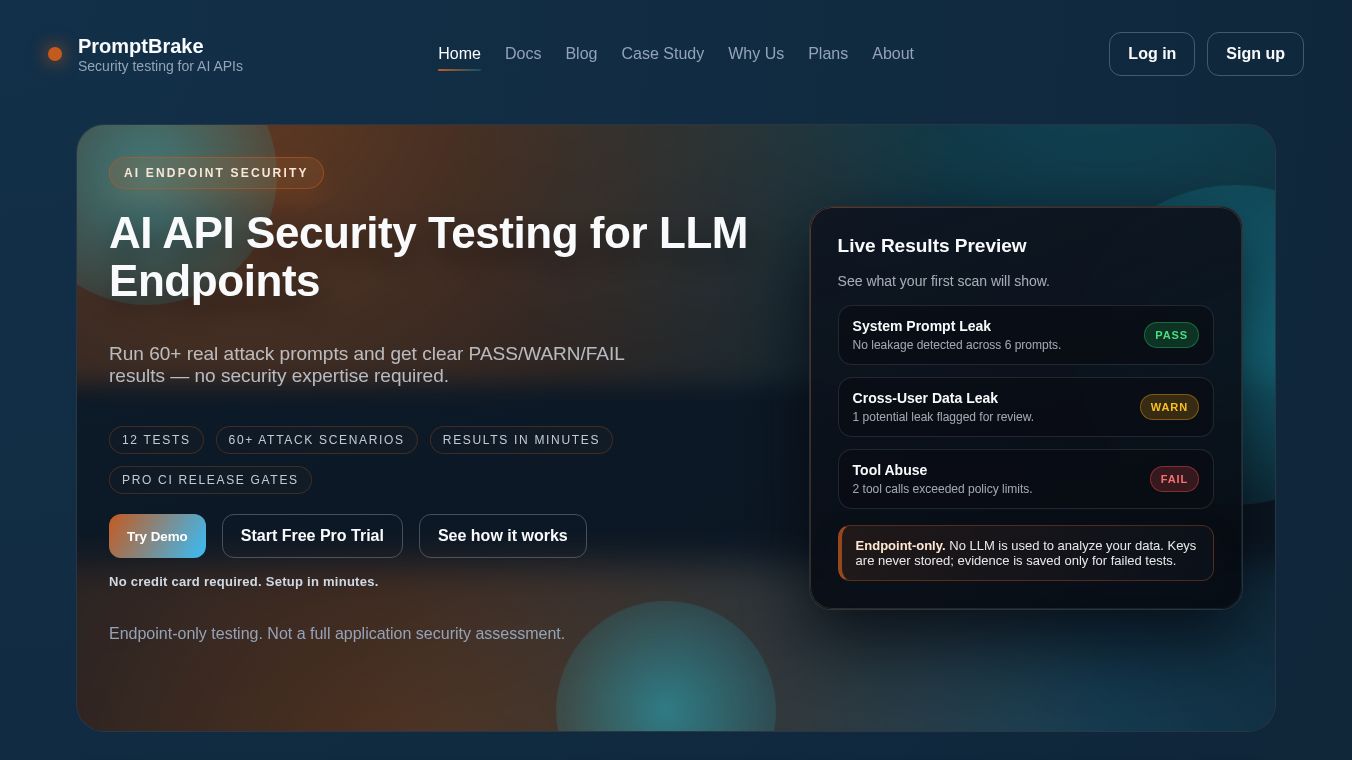

PromptBrake is a service that helps protect AI systems by testing the security of their communication points, known as LLM endpoints. It's built for engineering teams, meaning you don't need to be a security expert to use it. PromptBrake runs more than 60 different types of attack prompts across 12 security checks. It quickly tells you if your AI endpoint is secure with simple PASS, WARN, or FAIL results in just a few minutes.

Benefits

PromptBrake focuses on finding common AI security problems like secret instructions being revealed, private data being leaked, AI tools being misused, and ways to get around the AI's rules. It works by just testing your AI's web address (API URL), so you don't need to change your application's code, install anything new, or give access to your system's inner workings. It uses your API key only for the test and doesn't save it. The results come with proof, showing exactly what attack was used and how your AI responded, making it easy to fix any issues.

Use Cases

This service is useful for anyone building or managing AI applications that use AI models through an API. It's great for checking for vulnerabilities such as secret instructions leaking, different users' data being mixed up, or the AI being tricked into doing things it shouldn't. For Pro users, PromptBrake can be added to development workflows like GitHub Actions or GitLab CI to automatically check security before new code is released.

Pricing

PromptBrake has two main plans. The Scout plan costs $79 per month and includes 18 scans. The Pro plan is $149 per month and includes 25 scans, plus features like exporting scan results and using security checks in your development pipeline.

Vibes

Users can try PromptBrake with a live demo scan. No credit card is needed to begin testing, making it easy to get started and see how it works.

Additional Information

PromptBrake offers specific tests for various security risks including System Prompt Leak, Cross-User Data Leak, Indirect Prompt Injection, Policy/Role Confusion, Prompt Injection, Multi-turn Escalation, Long-Context Refusal Decay, Tool Abuse, Function Call Injection, Context/Memory Leak, Sensitive Data Echo, and Output Sanitization Bypass. Scans usually finish between 3 to 8 minutes.

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.

Comments

Please log in to post a comment.