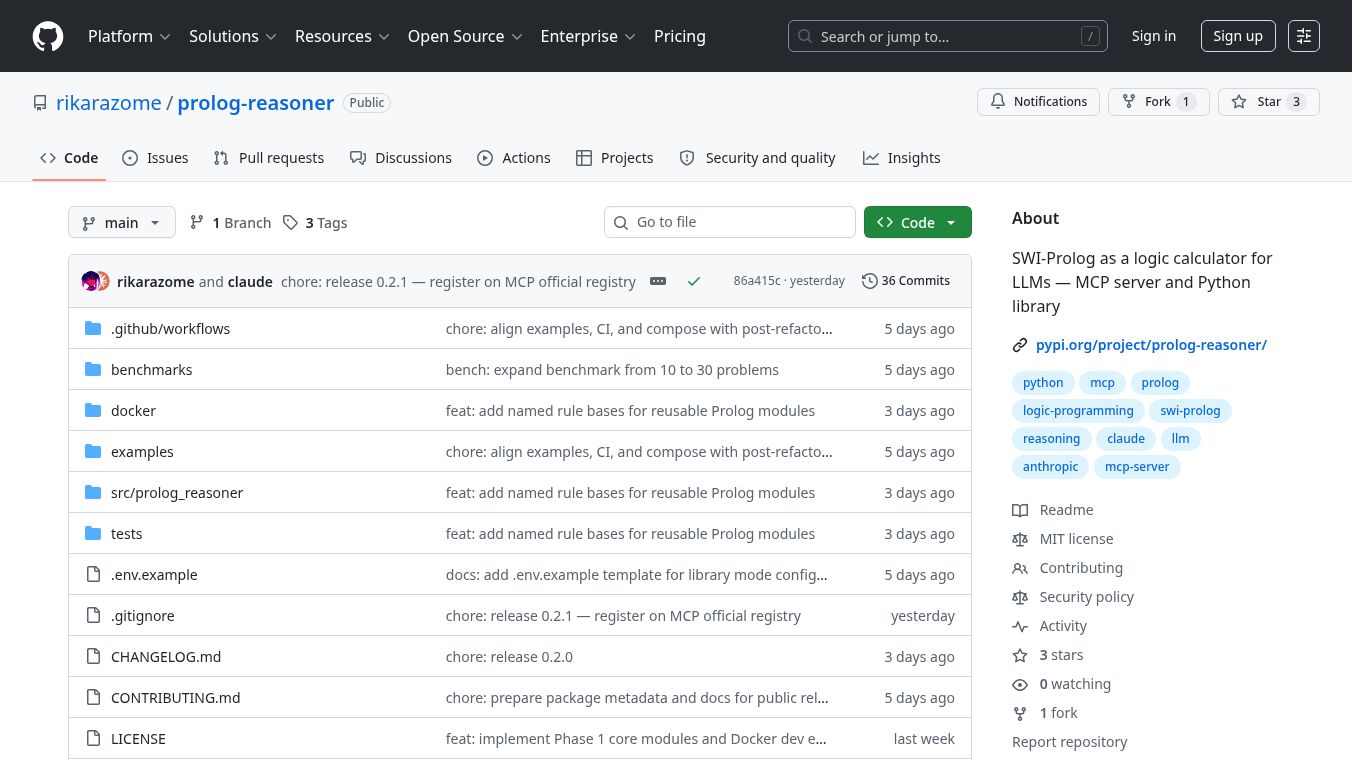

prolog-reasoner

The prolog-reasoner project helps large language models (LLMs) like ChatGPT or Claude perform logical reasoning. LLMs are great at understanding and generating text but often struggle with complex logic. Prolog is a programming language that is excellent at logic but cannot understand natural language. prolog-reasoner acts as a bridge, allowing LLMs to use Prolog's logical power.

Benefits

Using prolog-reasoner with an LLM significantly improves accuracy on logic problems. In tests, a combination of an LLM and prolog-reasoner solved 90% of logic problems correctly, compared to only 73.3% solved by the LLM alone. This improvement is especially noticeable in tasks that involve solving puzzles with many rules or figuring out steps in a sequence. It also makes the reasoning process clear and easy to check. When something goes wrong, you can see the exact Prolog code that caused the problem and why it failed.

Use Cases

prolog-reasoner can be used in two main ways. The first is as an MCP server, which lets LLMs call prolog-reasoner to solve logic problems during a conversation. This mode suits stable rule sets, such as the rules of chess or legal principles, that the LLM can refer to by name. The second is as a Python library, which turns natural-language questions directly into Prolog code using a system that can fix its own mistakes. The library mode requires an API key for a service such as OpenAI or Anthropic.
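The self-correcting behaviour of the library mode can be pictured as a generate, run, repair loop. The sketch below is an illustration of that general pattern only, not prolog-reasoner's actual API: `RunResult`, `solve_with_repair`, `fake_generate`, and `fake_execute` are hypothetical names, and the two fake functions stand in for a real LLM call and a real Prolog run so the example works without an API key or a Prolog installation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RunResult:
    ok: bool
    output: str  # the answer on success, the error message on failure


def solve_with_repair(
    question: str,
    generate: Callable[[str, Optional[str]], str],
    execute: Callable[[str], RunResult],
    max_attempts: int = 3,
) -> RunResult:
    """Generate code, run it, and feed any error back into the next attempt."""
    feedback: Optional[str] = None
    result = RunResult(ok=False, output="no attempts made")
    for _ in range(max_attempts):
        code = generate(question, feedback)  # hypothetical LLM call
        result = execute(code)               # hypothetical Prolog run
        if result.ok:
            return result
        feedback = result.output             # the error informs the next try
    return result


# Hypothetical stand-ins: the first draft lacks the final period,
# and the second draft fixes it once feedback is available.
def fake_generate(question: str, feedback: Optional[str]) -> str:
    return "answer(42)" if feedback is None else "answer(42)."


def fake_execute(code: str) -> RunResult:
    if code.endswith("."):
        return RunResult(ok=True, output="42")
    return RunResult(ok=False, output="syntax error: missing final period")
```

Passing the generator and executor in as arguments keeps the loop itself independent of any particular LLM provider or Prolog runtime, and the failed code and its error message stay available for inspection.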

Vibes

Early results show that combining LLMs with prolog-reasoner offers a significant boost in logical problem solving, particularly for complex tasks. While some errors can occur, they are often due to the LLM generating incorrect Prolog code, which is visible and can be corrected.

Additional Information

The project supports Python version 3.10 and newer. For the Python library mode, an API key for OpenAI or Anthropic is needed. The project can be installed using pip, with different options depending on whether you need support for OpenAI, Anthropic, or both. The MCP server can be set up through direct installation, Docker, or other tools, and it does not require an API key. The system is designed to be stateless, meaning each logic problem is solved independently, which helps in testing and ensures results are repeatable.
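To make the stateless design concrete, here is a toy sketch in which every call receives its complete set of facts and rules and keeps nothing between calls. It is a stand-in written in plain Python with simple propositional rules, not Prolog and not the project's implementation; the `solve` function and the `Rule` type are hypothetical names chosen for illustration.

```python
from typing import Iterable, Tuple

# A rule is (head, body): the head holds once every atom in the body holds.
Rule = Tuple[str, Tuple[str, ...]]


def solve(facts: Iterable[str], rules: Iterable[Rule], query: str) -> bool:
    """Toy stateless solver: all inputs arrive as arguments, nothing is cached."""
    known = set(facts)        # fresh working set for this call only
    rule_list = list(rules)
    changed = True
    while changed:            # forward-chain until no new atom is derived
        changed = False
        for head, body in rule_list:
            if head not in known and all(atom in known for atom in body):
                known.add(head)
                changed = True
    return query in known


rules = [("wet_ground", ("rain",)), ("slippery", ("wet_ground", "cold"))]
first = solve({"rain", "cold"}, rules, "slippery")
second = solve({"rain"}, rules, "slippery")  # unaffected by the earlier call
```

Because no call can see what a previous call derived, the same inputs always produce the same answer, which is the property that makes results repeatable and tests easy to write.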

