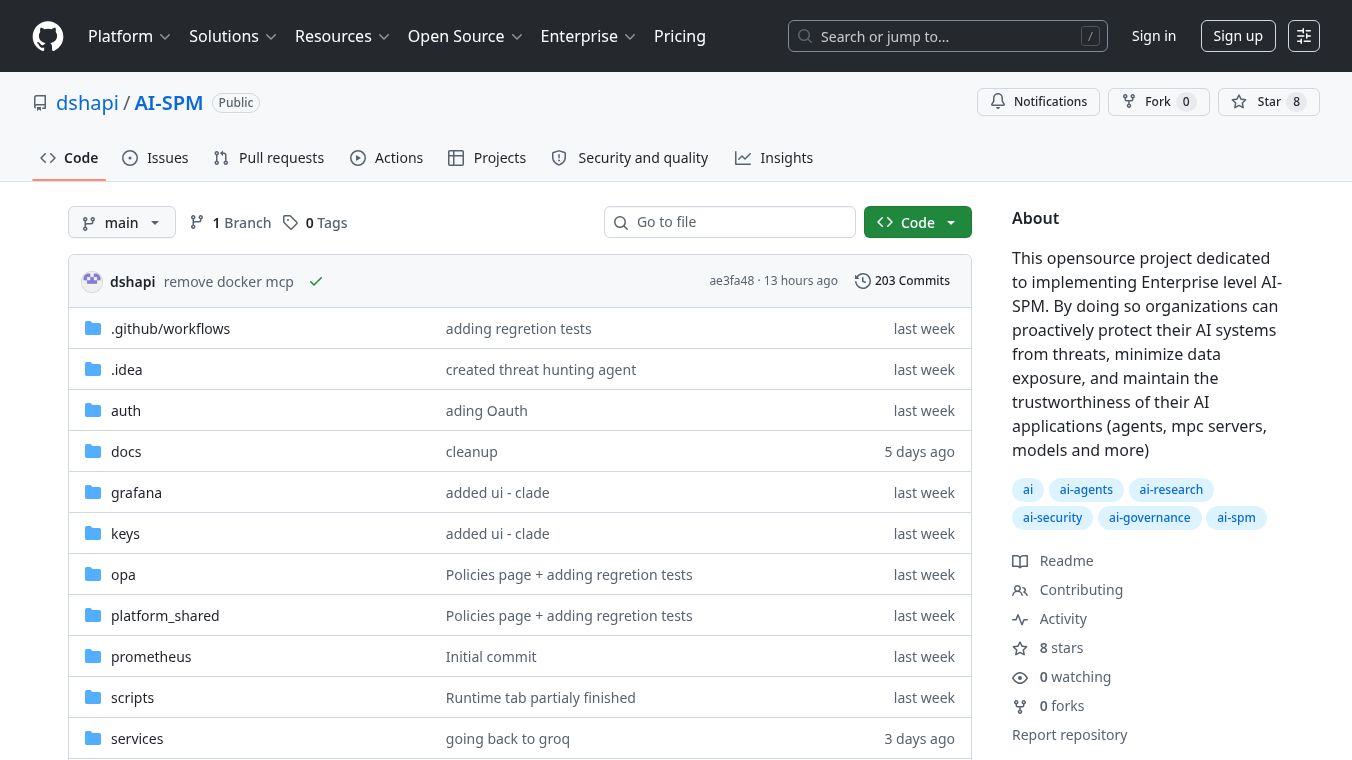

Orbix AI-SPM

Orbyx AI Security Posture Management (AI-SPM) is an open-source project designed to bring enterprise-level security to AI and machine learning systems. It acts as a comprehensive system for constantly checking, evaluating, and improving the safety of AI models, the data they use, and the technology that runs them. The goal is to find and fix potential weaknesses, incorrect settings, and dangers that come with using AI, while also making sure privacy and security rules are followed.

Benefits

Orbyx AI-SPM helps organizations protect their AI systems by finding AI models and related resources. It focuses on keeping data secure by addressing issues like data poisoning, bias, and privacy violations. The system helps users understand and deal with the most important AI risks first. It also makes it possible to quickly spot and react to unusual or suspicious activities happening within AI processes.

Use Cases

This platform can be used to discover and manage AI models, agents, and their resources within a company's environment. It's useful for protecting sensitive data used by AI, such as preventing poisoning or bias. Organizations can use it to prioritize which AI security risks to tackle first. It also helps in detecting and responding to threats in real-time within AI pipelines. The system includes an admin portal for managing security, visibility, and policy enforcement across different AI components. It also offers features for screening prompts, detecting prompt injection, and blocking risky requests based on a calculated risk score. Additionally, it scans AI outputs for sensitive information like API keys or personal data and manages AI models throughout their lifecycle.

Vibes

Orbyx AI-SPM is built with a microservices architecture, featuring 16 microservices, 6 OPA policies, and over 12 Kafka topics. It includes an administrative user interface and supports various models like Anthropic and OpenAI, as well as third-party imports. The compliance framework aligns with the NIST AI RMF. The system uses RS256 JWT authentication and Role-Based Access Control (RBAC) for security. It also ensures multi-tenancy from the start by scoping all data and events by tenant ID. For prompt and output security, it integrates Llama Guard 3 and scans for injection patterns. It also detects intent drift and scans for secrets and PII in LLM responses. The platform supports tools like web search and web fetch with authorization for their execution. Conversation history is stored for 30 days in Redis for context, with integrity verification and namespace scoping. It provides metrics for risk scores and enforcement actions, along with Grafana dashboards for AI security posture and compliance. An audit log in PostgreSQL tracks activities, and behavioral analytics detect anomalies. The infrastructure uses Kafka as an event bus. Installation requires Docker Desktop, Git, and Make, with commands likemake upto start all services. A threat hunting agent continuously scans for threats using LangChain, Groq, and Llama 3.3 70B. The tech stack includes Docker, Kafka, Redis, PostgreSQL, OPA, Prometheus, Grafana, Python, and React/Vite.

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.

Comments

Please log in to post a comment.