Observational Memory by Mastra

Observational Memory by Mastra is a memory system for AI agents. It condenses what an agent encounters into observations, loosely modeled on how humans process what they see and hear. The system is text-based and does not require a specialized database. It scores about 95% on the LongMemEval benchmark. It is also designed to work well with prompt caching, which saves time and money by reusing context that has already been processed, and its code is open source.

Benefits

Observational Memory helps AI agents remember and use large amounts of information efficiently. It condenses long stretches of text into short, readable notes, much like how our brains filter information. This lets agents recall important details without being overwhelmed by raw context. The system is also designed to be predictable: the stored context changes in a consistent way between turns, so cached results can be reused reliably, which reduces costs and speeds up interactions.
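The idea of condensing text into notes while keeping the context predictable can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mastra's implementation: the `ObservationBuffer` class, the `max_raw_messages` threshold, and the `summarize` placeholder are all hypothetical names, and a real system would use a language model where `summarize` simply keeps the first sentence of each message.

```python
from dataclasses import dataclass, field


def summarize(messages: list[str]) -> str:
    """Placeholder compressor: keep the first sentence of each message.

    Assumption for illustration only; a real system would call a
    language model here.
    """
    return " | ".join(m.split(".")[0].strip() for m in messages)


@dataclass
class ObservationBuffer:
    """Hypothetical append-only note store (not Mastra's API)."""

    max_raw_messages: int = 3                              # assumed threshold
    observations: list[str] = field(default_factory=list)  # condensed notes
    raw_messages: list[str] = field(default_factory=list)  # uncondensed tail

    def add(self, message: str) -> None:
        self.raw_messages.append(message)
        if len(self.raw_messages) >= self.max_raw_messages:
            # Fold the raw tail into one new note. Earlier notes are
            # never rewritten, so the note text only grows at the end.
            self.observations.append(summarize(self.raw_messages))
            self.raw_messages.clear()

    def stable_prefix(self) -> str:
        """Notes rendered as text; later versions extend earlier ones."""
        return "\n".join(f"- {note}" for note in self.observations)

    def prompt(self) -> str:
        """Stable notes first, then the recent raw messages."""
        return self.stable_prefix() + "\n---\n" + "\n".join(self.raw_messages)
```

Because notes are only ever appended, the text returned by `stable_prefix()` at one point in a conversation is always a prefix of what it returns later. That property is what makes prefix-based caching plausible in a design like this one.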

Use Cases

This memory system is useful for AI agents that take in a lot of information quickly. For example, a coding agent could use Observational Memory to keep track of user requests, priorities, and important details from a conversation, storing them in a log-like format. It also benefits newer AI agents that handle many kinds of tasks, such as browsing the web or writing code, because it helps manage the large amount of context they generate. The system is designed to work within the normal context limits of AI models, making it practical for real-world applications.
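As a rough picture of what a log-like record of requests and priorities might look like for a coding agent, here is a minimal Python sketch. The field names, the three priority levels, and the line format are assumptions made for this example and are not taken from Mastra's actual observation format.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Priority(Enum):
    """Assumed priority levels, for illustration only."""

    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass(frozen=True)
class Observation:
    """One hypothetical log entry: when it was noted, how much it matters, what it says."""

    day: date
    priority: Priority
    text: str

    def to_line(self) -> str:
        return f"{self.day.isoformat()} [{self.priority.value}] {self.text}"


def render_log(observations: list[Observation]) -> str:
    """Render entries oldest first, one per line, like a plain-text log."""
    ordered = sorted(observations, key=lambda o: o.day)
    return "\n".join(o.to_line() for o in ordered)


def high_priority(observations: list[Observation]) -> list[Observation]:
    """Return only the entries marked as high priority."""
    return [o for o in observations if o.priority is Priority.HIGH]
```

A plain-text rendering like this can be placed directly in a model's context, which fits the text-based design described on this page.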

Vibes

Observational Memory has performed strongly on the LongMemEval benchmark, outperforming other memory methods. It scored 94.87% with GPT-5-mini and 93.27% with Gemini-3-Pro-Preview, ahead of both standard approaches and other specialized memory systems.

Additional Information

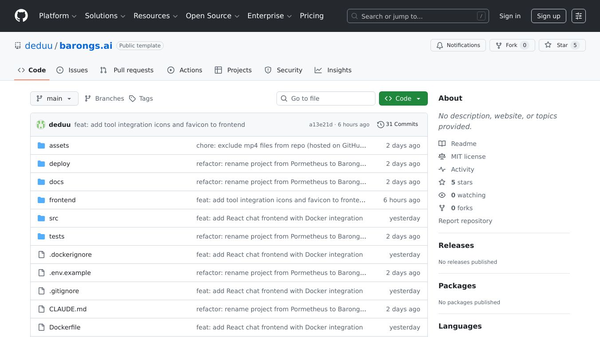

Observational Memory is an evolution of Mastra's earlier memory systems. It was developed to handle the challenges of modern AI agents that produce a lot of data quickly and require efficient memory management to control costs. The development is led by Tyler Barnes, a founding engineer at Mastra with experience in open-source tools and data processing.

