Meta-Reasoning

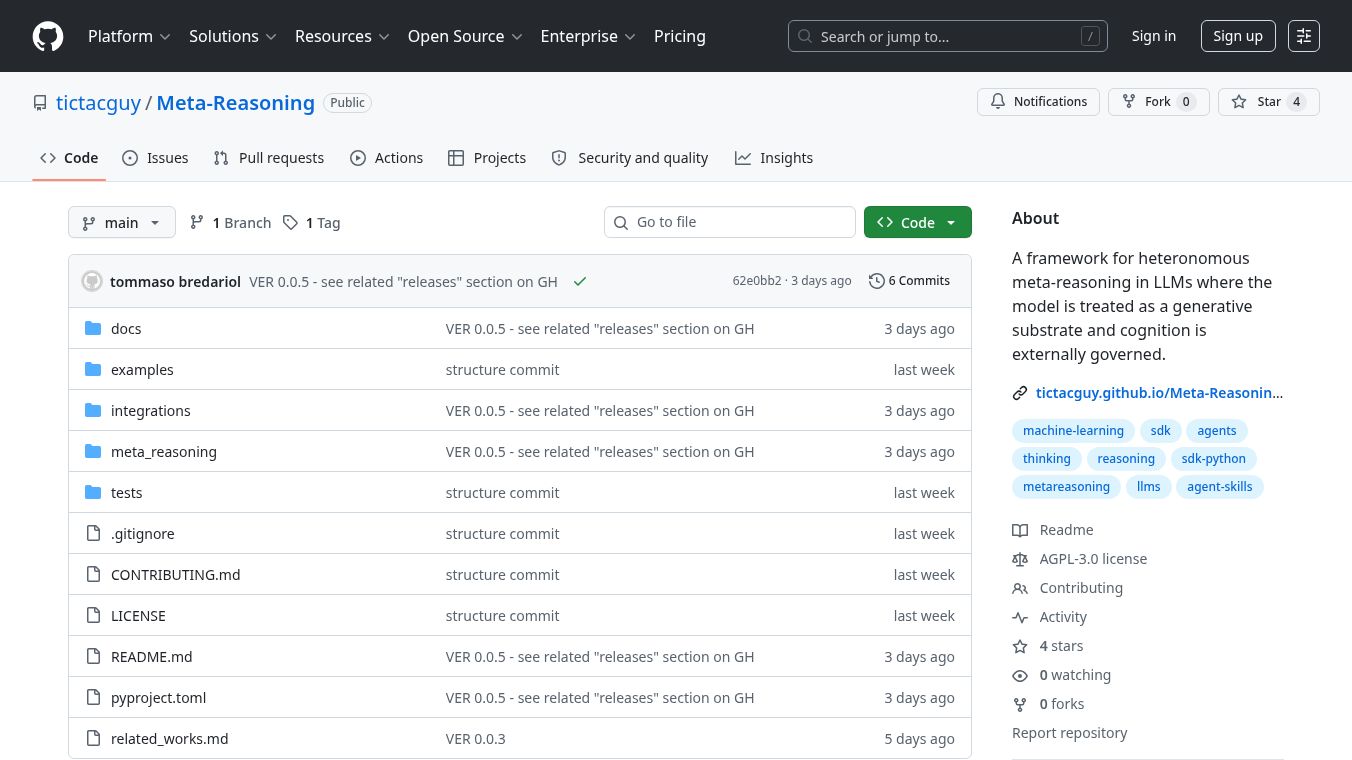

Meta-Reasoning is a software development kit (SDK) built on a different view of how large language models (LLMs) reason. Rather than assuming that an LLM has reasoning abilities of its own, it treats reasoning as something controlled from outside the model. The LLM is seen as a tool that follows instructions, while a separate component called the Cognitive Controller manages the thinking process by monitoring and modifying how the LLM reasons.

The system has three main parts. First, the Generative Substrate is the LLM itself: it produces text but makes no decisions. Second, the Cognitive Controller looks at the structure of the reasoning rather than the meaning of the words. It checks, for example, how varied the reasoning is, whether it has become stuck, and whether it has finished too early. Third, the Epistemic Ledger records the reasoning steps taken, the strategies that did not work, and ways to avoid repeating mistakes. Unlike a plain memory store, it tracks how the reasoning went, not just what information was seen.
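The division of labour between the three parts can be sketched in a few lines of Python. This is an illustrative sketch only, not the SDK's actual API: the names `Step`, `EpistemicLedger`, `CognitiveController`, `is_stuck`, and `run` are all hypothetical, and the "stuck" check (the same step kind repeated too many times in a row) is just one simple structural signal chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    kind: str  # hypothetical step label, e.g. "assumption", "deduction", "conclusion"
    text: str  # the generated text; the controller below never reads it


@dataclass
class EpistemicLedger:
    """Records the reasoning trace and the strategies that failed."""
    steps: List[Step] = field(default_factory=list)
    failed_strategies: List[str] = field(default_factory=list)

    def record(self, step: Step) -> None:
        self.steps.append(step)


class CognitiveController:
    """Inspects only the structure of the trace (step kinds), not the wording."""

    def __init__(self, max_repeats: int = 2) -> None:
        self.max_repeats = max_repeats

    def is_stuck(self, ledger: EpistemicLedger) -> bool:
        # Stuck means: more than max_repeats consecutive steps of the same kind.
        tail = [s.kind for s in ledger.steps][-(self.max_repeats + 1):]
        return len(tail) > self.max_repeats and len(set(tail)) == 1


def run(substrate: Callable[[str], Step], prompt: str,
        controller: CognitiveController, ledger: EpistemicLedger,
        max_steps: int = 10) -> EpistemicLedger:
    """Drive the substrate (a stand-in for the LLM) under controller supervision."""
    for _ in range(max_steps):
        step = substrate(prompt)  # the substrate only generates
        ledger.record(step)
        if step.kind == "conclusion":
            break
        if controller.is_stuck(ledger):
            # The failure is stored as information instead of being discarded.
            ledger.failed_strategies.append(f"repeated {step.kind}")
            break
    return ledger
```

Here `substrate` is any callable that returns one `Step` per call, so a real model client or a scripted fake can be plugged in without touching the controller.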

Key ideas in Meta-Reasoning include a structured format in which the LLM exposes its reasoning steps, such as assumptions or deductions. The SDK also defines a list of cognitive moves and a set of commands called mutation operators. These operators guide or restrict the LLM's reasoning, for example by forbidding certain kinds of step or by requiring the model to reason in more depth. Failures, such as getting stuck, are treated as information to learn from rather than as noise.
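To make the idea of mutation operators concrete, here is a minimal sketch that treats them as small rule objects applied to a trace of typed steps. The operator names `Forbid` and `RequireDepth`, the `violations` method, and the step kinds used are assumptions made for illustration; the SDK's real operators and their signatures may look quite different.

```python
from dataclasses import dataclass
from typing import List, Protocol


@dataclass(frozen=True)
class Step:
    kind: str  # hypothetical cognitive move, e.g. "assumption", "analogy"
    text: str


class MutationOperator(Protocol):
    def violations(self, trace: List[Step]) -> List[str]: ...


@dataclass(frozen=True)
class Forbid:
    """Disallow a given kind of step anywhere in the trace."""
    kind: str

    def violations(self, trace: List[Step]) -> List[str]:
        return [
            f"forbidden step kind '{self.kind}' at index {i}"
            for i, step in enumerate(trace)
            if step.kind == self.kind
        ]


@dataclass(frozen=True)
class RequireDepth:
    """Require a minimum number of non-conclusion steps."""
    min_steps: int

    def violations(self, trace: List[Step]) -> List[str]:
        body = [s for s in trace if s.kind != "conclusion"]
        if len(body) < self.min_steps:
            return [f"only {len(body)} step(s) before conclusion; need {self.min_steps}"]
        return []


def check(trace: List[Step], operators: List[MutationOperator]) -> List[str]:
    """Apply every operator and collect the violations in operator order."""
    problems: List[str] = []
    for op in operators:
        problems.extend(op.violations(trace))
    return problems
```

Because each rule is an ordinary value, a rule set can be kept in version control and exercised with unit tests, which is one plausible reading of "reasoning rules as code".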

Benefits

Meta-Reasoning provides tools for debugging reasoning processes: users can pause a run, examine the steps taken so far, and go back to an earlier step. Reasoning rules are written as code, which makes them easy to manage and test. The SDK also supports comparing LLMs by how they reason, not just by their answers. It can identify a model's reasoning habits, map out its failures, and make reasoning repeatable. It also helps prevent LLMs from making things up by constraining their reasoning patterns before they produce output.
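The pause, examine, and go-back workflow can be pictured as a trace with checkpoints. The sketch below is a toy model under stated assumptions: `ReasoningTrace`, `append`, `inspect`, and `rewind` are hypothetical names, and a checkpoint is simply the length of the trace at the moment it was taken.

```python
from typing import List, Tuple

StepRecord = Tuple[str, str]  # (kind, text); a hypothetical record shape


class ReasoningTrace:
    """A trace that can be inspected at any point and rewound to a checkpoint."""

    def __init__(self) -> None:
        self._steps: List[StepRecord] = []

    def append(self, kind: str, text: str) -> int:
        """Add a step and return a checkpoint id (the trace length after adding)."""
        self._steps.append((kind, text))
        return len(self._steps)

    def inspect(self) -> List[StepRecord]:
        """Return a copy so that examining the trace cannot modify it."""
        return list(self._steps)

    def rewind(self, checkpoint: int) -> List[StepRecord]:
        """Drop every step after the checkpoint and return the dropped steps."""
        if not 0 <= checkpoint <= len(self._steps):
            raise ValueError(f"unknown checkpoint {checkpoint}")
        discarded = self._steps[checkpoint:]
        del self._steps[checkpoint:]
        return discarded
```

Returning the discarded steps from `rewind` lets a caller log them as a failed path instead of losing them, in line with the idea of treating failures as information.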

Use Cases

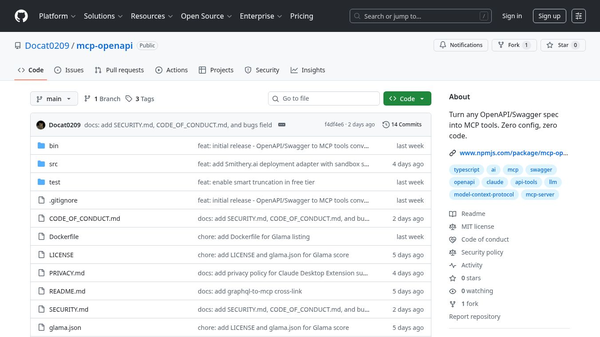

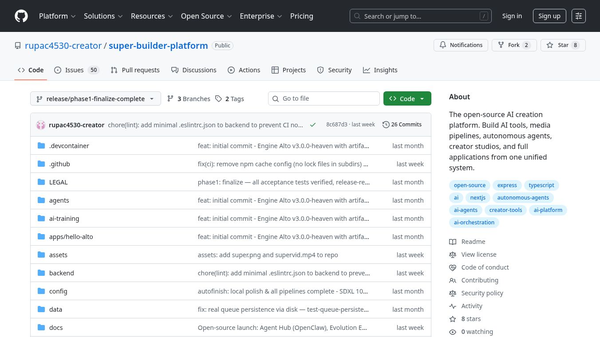

The SDK can be used to build more reliable AI systems. Developers can integrate it into their projects to gain finer control over an LLM's reasoning, to test and compare how different LLMs handle reasoning tasks, and to build applications that are less likely to generate incorrect information. It supports various LLMs and can be integrated into development workflows for automated testing.
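Comparing models by how they reason, rather than by their answers, could look something like the following sketch in an automated test. The metrics chosen here (step count, diversity of step kinds, longest run of one kind) and the function names are illustrative assumptions, not metrics documented by the SDK.

```python
from itertools import groupby
from typing import Dict, List


def longest_run(kinds: List[str]) -> int:
    """Length of the longest streak of identical consecutive step kinds."""
    return max((len(list(group)) for _, group in groupby(kinds)), default=0)


def structural_profile(kinds: List[str]) -> Dict[str, float]:
    """Summarise a trace using only its step kinds, ignoring the generated text."""
    total = len(kinds)
    return {
        "steps": total,
        # Share of distinct kinds: low values suggest repetitive reasoning.
        "diversity": len(set(kinds)) / total if total else 0.0,
        "longest_run": longest_run(kinds),
    }


def more_repetitive(profile_a: Dict[str, float], profile_b: Dict[str, float]) -> bool:
    """True if trace A shows a longer streak of one step kind than trace B."""
    return profile_a["longest_run"] > profile_b["longest_run"]
```

In a CI job, two models could be run on the same prompts and their profiles asserted against thresholds, for example failing the build when `longest_run` exceeds an agreed limit.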

Additional Information

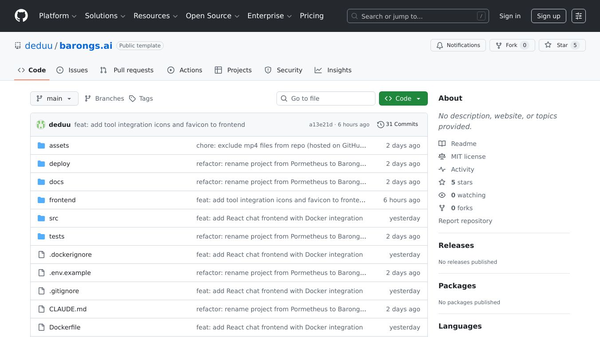

Meta-Reasoning can be installed using pip, and the project includes examples for different backends. Unlike methods such as Chain-of-Thought, it manages reasoning from outside the model. The project is licensed under AGPL-3.0.

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.
