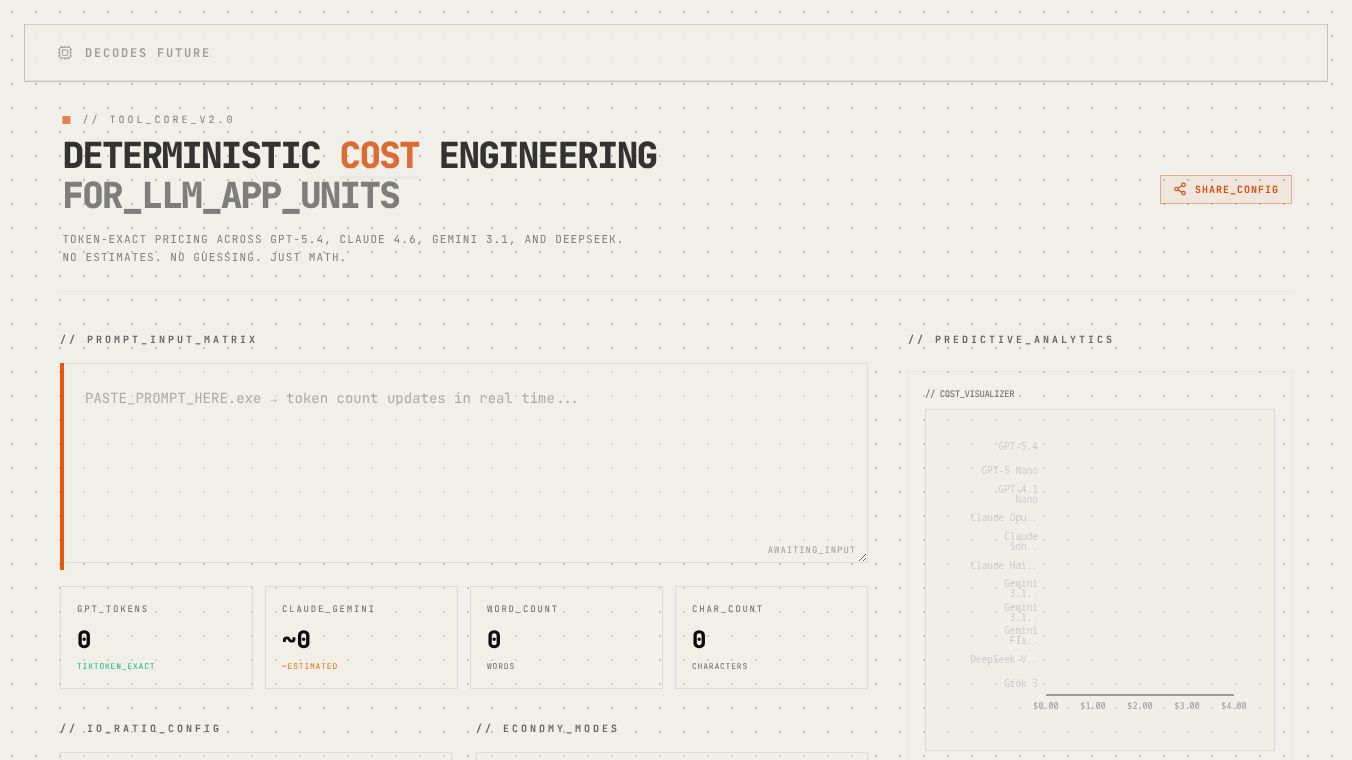

LLM Token & Cost Calculator

The LLM Token & Cost Calculator is a helpful tool that lets you compare prices for more than 200 different Large Language Models (LLMs). It helps you understand how much it will cost to use these AI models for your projects.

Benefits

This calculator makes it easy to see the costs associated with different LLMs. You can paste your text into the tool to count how many tokens it contains and calculate the corresponding cost. It also lets you compare prices per million tokens, helping you find the most budget-friendly options. The tool shows the input and output costs for each model separately, so you can make an informed decision.

Use Cases

Anyone working with Large Language Models can benefit from this calculator. It's useful for developers, businesses, or individuals who need to estimate the cost of using AI for tasks like content creation, data analysis, or customer service. By understanding token counts and costs, users can choose the right LLM that fits both their needs and their budget. For example, you can see that GPT-5 from OpenAI costs $1.25 for every million input tokens and $10.00 for every million output tokens.
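
As a rough sketch of the arithmetic behind such an estimate, the snippet below multiplies token counts by per-million-token prices. The function name `estimate_cost` and the example token counts are hypothetical and chosen only for illustration. The prices are the GPT-5 figures quoted above and should be verified against the provider's current pricing.

```python
def estimate_cost(input_tokens, output_tokens,
                  input_price_per_million, output_price_per_million):
    """Return the estimated cost in dollars for one request.

    Prices are expressed in dollars per one million tokens,
    matching how the calculator lists them.
    """
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical request: 2,000 input tokens and 500 output tokens,
# priced at $1.25 (input) and $10.00 (output) per million tokens.
cost = estimate_cost(2_000, 500, 1.25, 10.00)
print(f"Estimated cost: ${cost:.4f}")
```

Input and output are priced separately because the two rates differ, so a short prompt with a long response can cost more than a long prompt with a short response.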

Vibes

This calculator provides clear pricing details, showing input and output costs per million tokens for various models. It highlights the most affordable choices, making cost comparisons straightforward.

Additional Information

Tokens are the basic units of text that LLMs process. A token can be a word, part of a word, or a punctuation mark. In English, one token averages roughly four characters. Different models use different tokenizers, so the count for the same text may vary slightly between providers such as OpenAI, Anthropic, and Google.

The cost of using an LLM depends largely on how much computing power it needs and what it can do. Larger, more capable models such as GPT-4 and Claude Opus cost more because they require more processing. Smaller models such as GPT-4o-mini or Claude Haiku are cheaper but may perform worse on very difficult tasks.

Input tokens are the text you send to the model, and output tokens are the text the model returns. Most providers charge more for output tokens because generating them takes more computing effort. When estimating costs, allow for the possibility that the model's response is longer than your original request.
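
The four-characters-per-token rule of thumb can be turned into a quick estimate when no tokenizer is at hand. This is a minimal sketch under that assumption only. The name `estimate_tokens` and the constant `CHARS_PER_TOKEN` are hypothetical, and the result is an approximation that will differ from any provider's real tokenizer.

```python
import math

# Assumption: rough English average taken from the rule of thumb above.
# Real tokenizers differ by provider and by language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the token count of `text` from its character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


prompt = "Summarize the following report in three bullet points."
print(estimate_tokens(prompt))
```

For billing-grade numbers, count tokens with the tokenizer that belongs to the specific model instead of relying on this heuristic.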

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.
