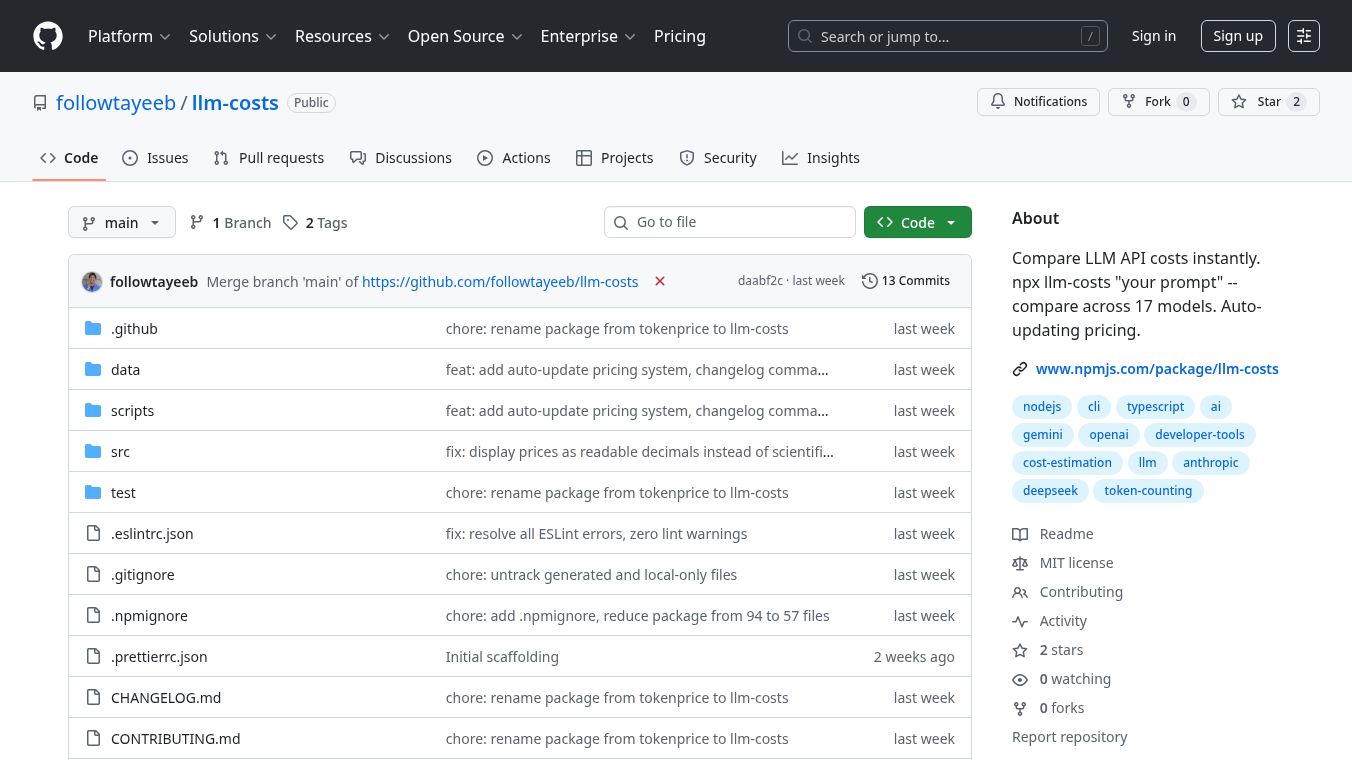

llm-costs

llm-costs is a command-line tool that estimates how much it will cost to use different large language models. It runs directly in your terminal, so you don't need any complicated setup or account sign-ups. You can see the estimated cost of sending prompts to various AI models and getting answers back.

Benefits

llm-costs makes it simple to understand and manage your spending on AI language models. It offers a quick way to compare prices across many models from companies like OpenAI, Anthropic, and Google. The tool helps you avoid unexpected bills by showing the estimated cost before you use a model. It can also count the tokens (the pieces of text that models are billed by) in your prompts, which is important for understanding costs. For automated tasks, it can even stop a process if costs go too high, helping you stay within budget.
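To make the budget-stop idea concrete, here is a minimal Python sketch of a cost guard for an automated job. It is not how llm-costs implements the feature: the `BudgetExceeded` class, the `enforce_budget` function, and the per-prompt cost figures are all hypothetical, chosen only for illustration.

```python
class BudgetExceeded(Exception):
    """Raised when the estimated total is above the allowed budget."""


def enforce_budget(estimated_costs, budget_usd):
    """Sum per-prompt cost estimates (USD) and fail if they exceed the budget."""
    total = sum(estimated_costs)
    if total > budget_usd:
        raise BudgetExceeded(
            f"estimated ${total:.4f} exceeds budget ${budget_usd:.4f}"
        )
    return total


# Hypothetical per-prompt estimates in USD; a real pipeline would compute these.
try:
    enforce_budget([0.0525, 0.0075], budget_usd=0.05)
except BudgetExceeded as err:
    print(f"stopping: {err}")
```

In a script, leaving the exception uncaught ends the process with a non-zero exit status, which is the usual way for an automated workflow to halt before any paid request is sent.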

Use Cases

This tool is useful for anyone who uses large language models regularly. Developers can use it to choose the most cost-effective model for their applications. Researchers can compare the cost versus performance of different models for their experiments. Businesses can use it to plan their AI budgets and monitor spending. You can use it to compare all available models for a specific question, get a detailed cost breakdown for one model, or just count the tokens in your text. It can also process multiple prompts from a file to estimate total costs and help project your daily, weekly, or monthly expenses.
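The expense projection mentioned above is simple arithmetic, and a short Python sketch shows the idea. The function name `project_spend`, the 30-day month, and the sample traffic numbers are assumptions made for this example, not details of llm-costs itself.

```python
def project_spend(cost_per_request, requests_per_day, days_per_month=30):
    """Scale one request's estimated cost (USD) to daily, weekly, and monthly totals."""
    daily = cost_per_request * requests_per_day
    return {
        "daily": daily,
        "weekly": daily * 7,
        "monthly": daily * days_per_month,  # assumes a flat 30-day month
    }


# Hypothetical workload: 2,000 requests a day at an estimated $0.0075 each.
projection = project_spend(cost_per_request=0.0075, requests_per_day=2_000)
for period, usd in projection.items():
    print(f"{period}: ${usd:.2f}")
```

The sketch assumes every request costs the same. For a file of varied prompts, summing the per-prompt estimates first and then scaling the total gives a better projection.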

Pricing

llm-costs itself is a free, open-source tool. The costs it helps you estimate are the charges for using the large language models themselves. For example, using Anthropic's Claude Opus 4.5 might cost around $15 for every million input tokens and $75 for every million output tokens. OpenAI's GPT-4o could cost $2.50 per million input tokens and $10 per million output tokens. Google's Gemini 2.5 Flash is much cheaper, at $0.075 per million input tokens and $0.30 per million output tokens. These prices are estimates and can change.
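Per-million-token prices turn into a per-request cost with one formula: input tokens times the input price plus output tokens times the output price, divided by one million. The Python sketch below applies that formula to the figures quoted above. The `PRICES` table and `estimate_cost` function are illustrative names rather than part of llm-costs, and the prices are the estimates from this page, not confirmed provider rates.

```python
# USD per million tokens as (input, output), copied from the paragraph above.
# These are the page's estimates and may not match current provider pricing.
PRICES = {
    "Claude Opus 4.5": (15.00, 75.00),
    "GPT-4o": (2.50, 10.00),
    "Gemini 2.5 Flash": (0.075, 0.30),
}


def estimate_cost(model, input_tokens, output_tokens):
    """Estimated USD cost of one request for the given token counts."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Compare the models for a request with 1,000 input and 500 output tokens,
# listing the cheapest first.
for model in sorted(PRICES, key=lambda m: estimate_cost(m, 1_000, 500)):
    print(f"{model}: ${estimate_cost(model, 1_000, 500):.6f}")
```

Output tokens are priced higher than input tokens in all three examples, so a short prompt that produces a long answer can cost more than a long prompt with a brief reply.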

Vibes

Users appreciate that llm-costs is easy to set up and use directly from the terminal. They like that it supports a wide range of popular AI models and provides clear cost comparisons. The ability to integrate it into automated workflows and prevent unexpected expenses is also a significant plus.

Additional Information

llm-costs is built using Node.js. The project is open source, so anyone can contribute to improving it, for example by adding support for new models or fixing bugs. The developers plan to add more features in the future, such as better integration with other tools and extensions for popular code editors.

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.
