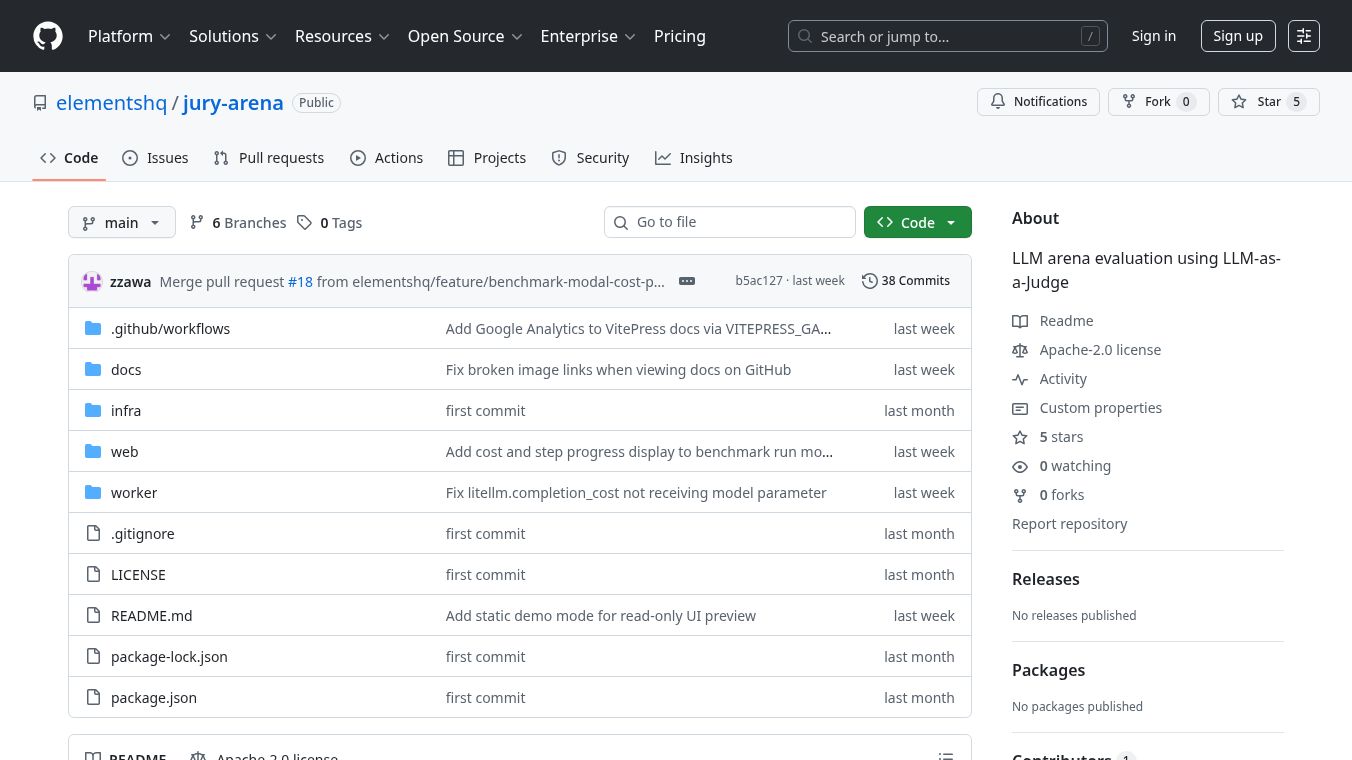

JuryArena

JuryArena is an open-source tool for comparing Large Language Models (LLMs). It works like an arena: models go head-to-head on real prompts taken from actual work, so you do not need reference answers prepared in advance to see which model performs better. It is designed to show how well models handle tasks under real-world conditions.

Benefits

JuryArena compares the quality of model responses without requiring pre-defined correct answers. An LLM acts as the judge, which makes evaluation more practical and lets the tool handle subjective quality assessments as well as tasks that involve documents such as PDFs. Models are ranked by their results in one-on-one matches, and the rankings update live. Multi-judge consensus is also supported: several judges review the responses, and the result is decided by majority vote. Users can choose between the Elo and Glicko-2 rating systems to match their budget and accuracy needs. All judgments, the reasoning behind them, costs, and response times are recorded for later review.
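
To make the ranking idea concrete, here is a minimal sketch of how a majority vote among judges can feed a standard Elo update after one match. It is not JuryArena's actual code: the function names, the "A" / "B" / "tie" verdict labels, and the K-factor of 32 are assumptions chosen for illustration, and Glicko-2 is not shown.

```python
from collections import Counter


def majority_vote(votes):
    """Combine judge verdicts ("A", "B", or "tie") into one outcome.

    Hypothetical helper: if the two most common verdicts are equally
    frequent, the match is treated as a tie.
    """
    if not votes:
        raise ValueError("at least one judge verdict is required")
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return "tie"
    return ranked[0][0]


def expected_score(rating_a, rating_b):
    """Standard Elo expected score of model A against model B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_update(rating_a, rating_b, outcome, k=32.0):
    """Return the new (rating_a, rating_b) after one match.

    outcome is "A", "B", or "tie"; k=32 is an assumed K-factor.
    """
    score_a = {"A": 1.0, "B": 0.0, "tie": 0.5}[outcome]
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


# Three judges review one match between two equally rated models.
outcome = majority_vote(["A", "A", "B"])
new_a, new_b = elo_update(1500.0, 1500.0, outcome)
print(outcome, new_a, new_b)
```

With equal starting ratings the expected score is 0.5, so the winner gains half the K-factor and the loser gives up the same amount.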

Use Cases

This tool is useful for anyone needing to evaluate and compare LLMs. It can be used to test models with production logs, which can be uploaded as JSONL or ZIP files. It's particularly helpful for tasks like Retrieval-Augmented Generation (RAG) and answering questions based on documents, especially when file attachments like PDFs are involved. The system can also handle multi-turn conversations, making it suitable for chatbot evaluations.
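
As a rough illustration of what reading a JSONL production log involves, the sketch below parses one JSON object per line into a list of conversations. The record layout, a "messages" list of role/content entries, is an assumption made for this example and is not JuryArena's documented schema; check the tool's sample templates for the real field names.

```python
import json


def load_conversations(jsonl_text):
    """Parse JSONL text into a list of conversations.

    Assumed (hypothetical) schema: each line is a JSON object with a
    non-empty "messages" list of {"role": ..., "content": ...} dicts.
    """
    conversations = []
    for line_no, line in enumerate(jsonl_text.splitlines(), start=1):
        line = line.strip()
        if not line:
            continue  # skip blank lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            raise ValueError(f"line {line_no}: invalid JSON ({exc})") from exc
        messages = record.get("messages") if isinstance(record, dict) else None
        if not isinstance(messages, list) or not messages:
            raise ValueError(f"line {line_no}: missing 'messages' list")
        conversations.append(messages)
    return conversations


# One single-turn prompt and one multi-turn chat, one JSON object per line.
sample = "\n".join([
    json.dumps({"messages": [{"role": "user", "content": "Summarize this PDF."}]}),
    json.dumps({"messages": [
        {"role": "user", "content": "Hi"},
        {"role": "assistant", "content": "Hello! How can I help?"},
        {"role": "user", "content": "What is our refund policy?"},
    ]}),
])
for conversation in load_conversations(sample):
    print(len(conversation), "message(s); last:", conversation[-1]["content"])
```

Keeping each conversation as an ordered list of messages preserves multi-turn context, which matters when the logs come from a chatbot.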

Vibes

Users can upload their own production logs to get started quickly. Sample templates are available to make the process easier.

Additional Information

Running JuryArena requires Docker, Docker Compose, and Node.js v24.x or later. After setup, you configure API keys for your LLM providers, define the models you want to compare, and start the system with Docker Compose. The architecture has two layers: a Web layer for the user interface and orchestration, and a Python Worker layer for LLM interactions and calculations. A PostgreSQL database is used for caching data.

This content is either user submitted or generated using AI technology (including, but not limited to, Google Gemini API, Llama, Grok, and Mistral), based on automated research and analysis of public data sources from search engines like DuckDuckGo, Google Search, and SearXNG, and directly from the tool's own website and with minimal to no human editing/review. THEJO AI is not affiliated with or endorsed by the AI tools or services mentioned. This is provided for informational and reference purposes only, is not an endorsement or official advice, and may contain inaccuracies or biases. Please verify details with original sources.
