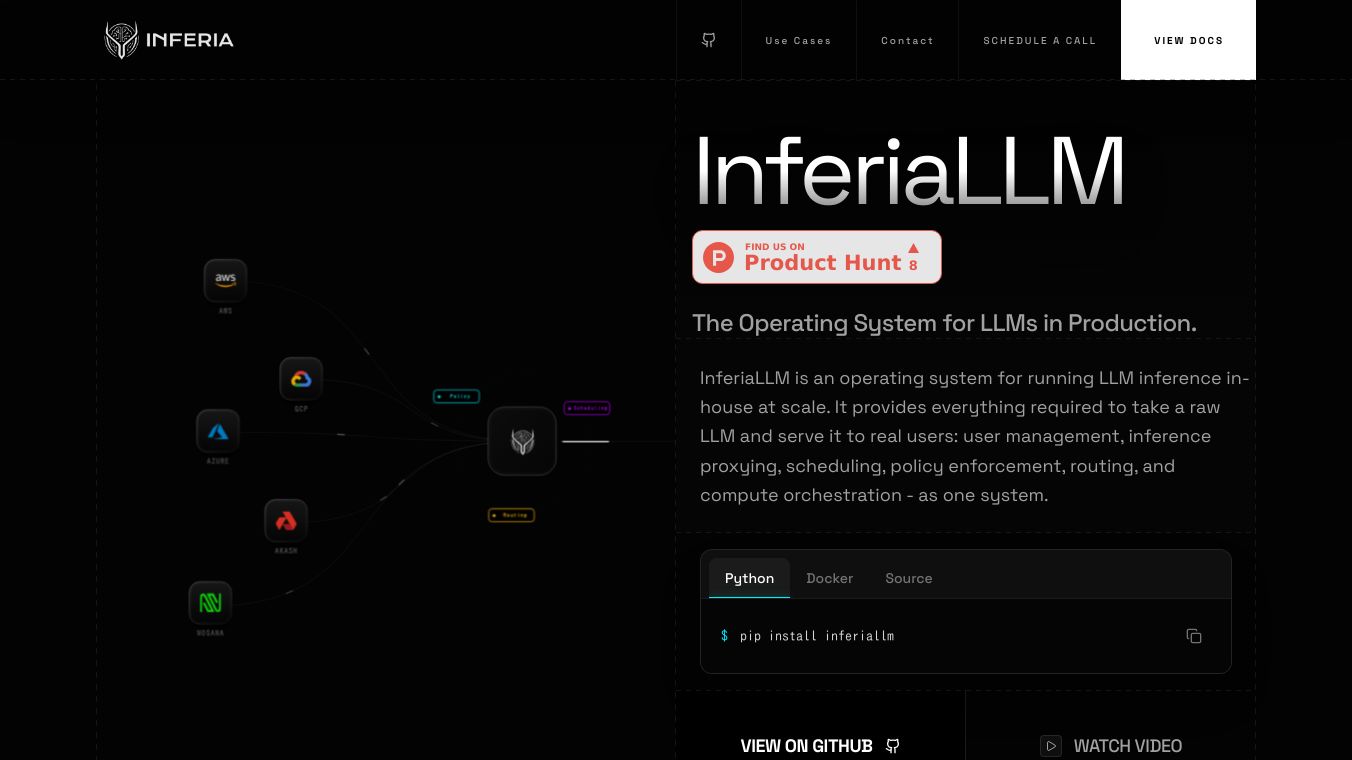

InferiaLLM

InferiaLLM addresses a common problem: to serve LLMs in production, teams typically have to build a complex internal platform from scratch, with components such as an inference proxy, user management, authentication, request scheduling, routing infrastructure, GPU orchestration, and audit logs. InferiaLLM aims to replace that custom-built platform.

Benefits

InferiaLLM simplifies making LLMs available to users. It handles many of the tasks that teams would otherwise have to build from scratch, such as managing users, routing requests, and orchestrating compute resources, so teams can focus on using LLMs rather than building the underlying infrastructure. It also supports running LLMs on various types of computer systems, giving flexibility in how and where models are deployed.

Use Cases

InferiaLLM is suited to situations where secure and private use of LLMs is important. This includes law firms working with sensitive client information, healthcare organizations that need to protect patient data while complying with HIPAA, and financial companies that must follow strict rules for AI systems. It is also useful for any enterprise that wants to avoid building its own complex LLM serving system, and for government entities that need to keep data under their own control because of national security or compliance rules.


